Background

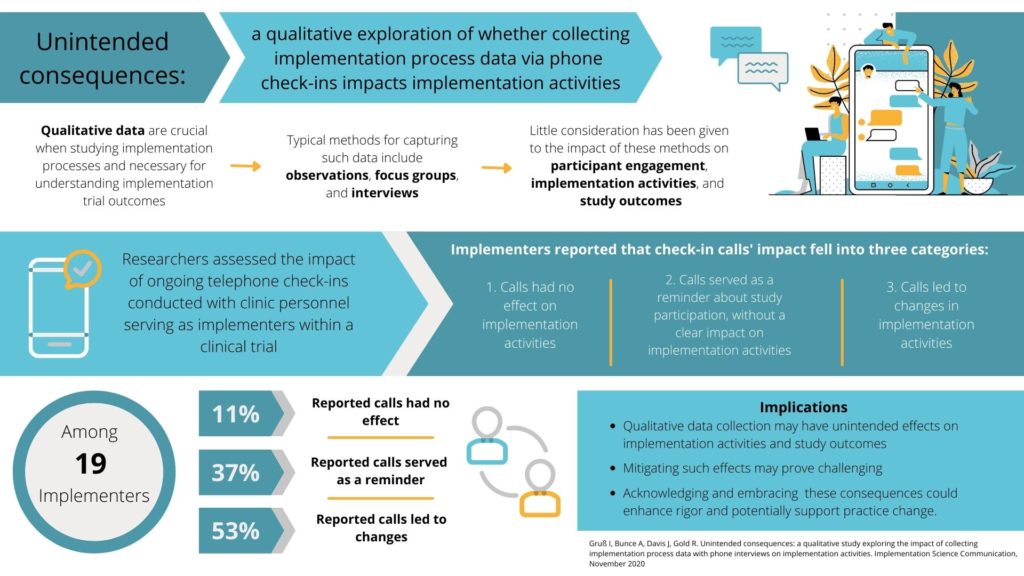

Qualitative data are crucial for studying implementation processes and are therefore necessary for understanding implementation trial outcomes. Typical methods for capturing such data include observations, focus groups, and interviews. However, little consideration has been given to how these methods create interactions between researchers and study participants that could affect participants’ engagement in study-related implementation activities, and thus study outcomes. To better understand these potential impacts, researchers examined ongoing telephone check-ins conducted with clinic personnel who were serving as study implementers within a clinical trial. The calls were designed to capture qualitative data about implementation activities, and the researchers assessed whether and how they might have inadvertently affected the quality of the data collected and participants’ engagement in study activities.

Useful Findings

The clinic study implementers reported that the check-in calls’ impact fell into three categories: (1) the calls had no effect on implementation activities, (2) the calls served as a reminder about study participation without having a clear impact on implementation activities, and (3) the calls led to changes in implementation activities. Some study implementers reported that anticipating the check-in calls also improved their ability to recount implementation activities and positively affected the quality of the data collected. The study investigators similarly perceived that the phone check-ins served as reminders and encouraged some implementers’ engagement in related activities. The calls’ ongoing nature also created personal connections between study staff and study implementers, which might have affected implementation activities.

Bottom Line

This study, published in Implementation Science Communications, illustrates the potential for qualitative data collection to have unintended effects on implementation activities, and possibly on study outcomes, and underscores the complexities of capturing such data in a minimally impactful manner. Mitigating such effects may prove challenging, but acknowledging these consequences, and embracing them, perhaps by designing data collection methods as implementation strategies, could enhance scientific rigor.